set.seed(19941129)

graded_question <- sample(1:9,size = 1)

paste("Question",graded_question,"is the graded question for this week")[1] "Question 3 is the graded question for this week"Your Name HERE

September 4, 2025

In this lab we’ll continuing exploring the COVID-19 data for the U.S.

We covered a lot of ground in class. Conceptually, talked about how to

To do this, we copied and pasted a lot of code. Today, we’ll get practice writing our own code. Specifically we will

One of these tasks (excluding the weekly survey) will be randomly selected as the graded question for the lab.

html fileinstall.packages() function to install the lubridate and DT package packageslubridate is installed, comment the code out by placing a # at the start of the lineThe 'lubridate package is a collection of functions that makes working with dates and time more simple.

The DT package allows us to display data tables in a searchable format in our html output.

library() to load the following packages

tidyverseCOVID19lubridateDT── Attaching core tidyverse packages ──────────────────────── tidyverse 2.0.0 ──

✔ dplyr 1.1.4 ✔ readr 2.1.5

✔ forcats 1.0.0 ✔ stringr 1.5.1

✔ ggplot2 3.5.2 ✔ tibble 3.3.0

✔ lubridate 1.9.4 ✔ tidyr 1.3.1

✔ purrr 1.1.0

── Conflicts ────────────────────────────────────────── tidyverse_conflicts() ──

✖ dplyr::filter() masks stats::filter()

✖ dplyr::lag() masks stats::lag()

ℹ Use the conflicted package (<http://conflicted.r-lib.org/>) to force all conflicts to become errorsOpen up the slides from last class and copy and paste the relevant code into the code chunk below.

Specifically please do the following:

territories that is a vector containing the names of U.S. territories<- and c() (slidesCreate a new dataframe, called covid_us, by filtering out observations from the U.S. territories - Use<-,%>%,filter(),!and%in%` (slides

Create a state variable that is a copy of the administrative_area_level_2

%>%, mutate() and ->new_cases from the confirmed. Create a variable called new_cases_pc that is the number of new Covid-19 cases per 100,000 citizens\<-,%\>%,filter(),!and%in%\ (slidesface_masks from the facial_coverings variable. (slides# ---- 1. Create territories object

territories <- c(

"American Samoa",

"Guam",

"Northern Mariana Islands",

"Puerto Rico",

"Virgin Islands"

)

# ---- 2. Create covid_us data frame

covid_us <- covid %>%

filter(!administrative_area_level_2 %in% territories)

# ---- 3-5. Recode variables in covid_us

covid_us %>%

mutate(

state = administrative_area_level_2,

) %>%

dplyr::group_by(state) %>%

mutate(

new_cases = confirmed - lag(confirmed),

new_cases_pc = new_cases / population *100000

) %>%

mutate(

face_masks = case_when(

facial_coverings == 0 ~ "No policy",

abs(facial_coverings) == 1 ~ "Recommended",

abs(facial_coverings) == 2 ~ "Some requirements",

abs(facial_coverings) == 3 ~ "Required shared places",

abs(facial_coverings) == 4 ~ "Required all times",

) %>% factor(.,

levels = c("No policy","Recommended",

"Some requirements",

"Required shared places",

"Required all times")

)

) -> covid_usNext let’s compare our new face_mask variable to the original facial_coverings variable using the table command.

Uncomment the following code and replace the generic terms data, variable1, and variable2 with appropriate terms.

-4 -3 -2 -1 0 1 2 3 4

No policy 0 0 0 0 3893 0 0 0 0

Recommended 0 0 0 275 0 8604 0 0 0

Some requirements 0 0 7362 0 0 0 17424 0 0

Required shared places 0 5897 0 0 0 0 0 9191 0

Required all times 410 0 0 0 0 0 0 0 622You’ve just created a “crosstab” a frequency table showing the joint distribution of two variables.

Crosstabs are powerful tools for getting a sense of your data and for checking whether a recode did what you wanted it to do.

Finally, let’s create a few more variables called year_month from the date variable and a variable describing the percent of a state’s population that is fully vaccinated (percent_vaccinated), which we’ll use later in the lab.

Highlight the commented code below from # covid_us %>% to # ) -> covid_us and press shift + cmd + C on a mac or shift + ctrl + C on PC to uncomment the code.

year(date) extracts the year from our date variable and saves it in new column called yearmonth(date) extracts the month from our date variable and saves it in a new column called monthpaste() command pastes these two variables together, with the str_pad() adding a leading 0 to single digit months.From the documentation of the COVID19 package, we see that the numeric values of the facial_coverings correspond to following substantive policy regimes:

These data come from the Oxford Covid-19 Government Response Tracker. Oxford distinguishes between policies that are in effect for the entire administrative unit (e.g. the State of New York) and policies that may be in effect in only parts of the administrative unit (e.g. New York city)

In short: positive integers identify policies applied to the entire administrative area. Negative integers are used to identify policies that represent a best guess of the policy in force, but may not represent the real status of the given area. The negative sign is used solely to distinguish the two cases, it should not be treated as a real negative value.

Let’s get some practice using the filter(), select() group_by() and summarize(), and n() commands from dplyr package to understand how common each these negative values are in our data.

# A tibble: 4 × 4

state n earliest_date latest_date

<chr> <int> <date> <date>

1 Illinois 156 2020-10-01 2021-05-15

2 Massachusetts 35 2020-10-02 2020-11-05

3 South Carolina 61 2020-10-13 2020-12-12

4 Maryland 158 2020-11-06 2021-04-12 covid_us %>% tells R that every line of code after will use covid_us dataframefilter(facial_coverings == -4) %>% tells R to filter out only the rows where the facial coverings variable equals -4select(date, state) %>% tells R to select the columns named date and stategroup_by(state) %>% tells R that subsequent commands should be done separately for each unique value of statesummarize( tells R we want to summarize the output of susequent commandsn = n(), tells R to count the number of observations (state-dates) for each state that had a value of -4 on the facial_coverings variableearliest_date = min(date), tells R to report the earliest date that each state had a value of -4latest_date = max(date), tells R to report the last date that each state had a value of -4)%>% tells R we’re finished with the summarize() functionarrange(earliest_date) arranges the data in asscending order from earliest to latest start dateYou may find this cheatsheet useful and you can find a more detailed discussion here

Substantively, what does the previous chunk of code tell us?

Filtering data, selecting specific variables, and summarizing variables are important skills that let us “know our data”

To make sure we understand what this policy variable facial_coverings is measuring, let’s download the source data from Oxford

Rows: 56992 Columns: 81

── Column specification ────────────────────────────────────────────────────────

Delimiter: ","

chr (32): CountryName, CountryCode, RegionName, RegionCode, Jurisdiction, C1...

dbl (48): Date, C1_School closing, C1_Flag, C2_Workplace closing, C2_Flag, C...

lgl (1): M1_Wildcard

ℹ Use `spec()` to retrieve the full column specification for this data.

ℹ Specify the column types or set `show_col_types = FALSE` to quiet this message.Now let’s look at the policy on face masks for Illinois. From Oxford’s codebook, we learn that variables describing face mask policies all begin with the prefix H6_

Using common prefixes for a variable is a good habit that will help you organize and work with your data

# A tibble: 8 × 4

date `H6_Facial Coverings` H6_Flag H6_Notes

<date> <dbl> <dbl> <chr>

1 2020-08-21 2 1 "In Executive Order 2020-52, Executi…

2 2020-08-26 2 1 "Effective from 26 August 2020, the …

3 2020-09-18 2 1 "On 18 September, in Executive Order…

4 2020-10-01 4 0 "Originally coded a 3T, but looking …

5 2020-10-16 4 0 "In Executive Order (EO) 2020-59, Go…

6 2020-11-13 4 0 "Noting that Executive Order 2020-71…

7 2020-11-20 4 0 "Executive Order 2020-73 requires pe…

8 2020-12-01 3 1 "Chicago seems to have changed its g…oxford_us %>% Tells R to use the Oxford policy datamutate(date = ymd(Date))%>% Creates a date variable of class date from the original Date variable (which was class numeric)filter(RegionName == "Illinois", subsets the data to just Illinoisdate > "2020-08-01", filters out dates before August 1, 2020date < "2021-01-01", filters out observations with dates after January 1,2021!is.na(H6_Notes)) %>% filters out observations without notes (which appear in the data when policy changes)select(date,starts_with("H6_")) -> il_facemasks Selects just the date and notes variables and saves them to an object called il_facemasksil_facemasks prints the obejct in the consoleLet’s take a look at the H6_Notes variable for 2020-09-18

[1] "On 18 September, in Executive Order 2020-55, the Governor reissued most executive orders, extending a majority of the provisions through 17 October 2020. This includes mask requirements. https://web.archive.org/web/20200922144918/https://www2.illinois.gov/Pages/Executive-Orders/ExecutiveOrder2020-55.aspx"Now update the code to select H6_Notes variable for 2020-10-01

[1] "Originally coded a 3T, but looking at the below description, which includes even residential buildings, it is hard to conceive of a time outside the home when a Chicago resident would not be required to wear a mask. The Phase IV \"Gradually Resume\" guidelines seem not to provide any significant exemption (https://archive.fo/dOyY9). Hence code moves up to 4T. Effective October 1, 2020, residents of Chicago are required to wear masks in all public places. “Any individual who is over age two and able to medically tolerate a mask shall be required to wear a mask when in a public place, which for purposes of this Order includes any common or shared space in: (1) a residential multi-unit building or (2) any non-residential building, unless otherwise provided for in the Phase IV: Gradually Resume guidelines promulgated by the Office of the Mayor (\"Gradually Resume Guidelines\")” Additionally, but separately, “Individuals must, at all times and as much as reasonably possible, maintain Social Distancing from any other person who does not live with them.” https://web.archive.org/web/20201116163255/https://www.chicago.gov/content/dam/city/sites/covid/health-orders/CDPH%20Order%202020-9%20-%205th%20Amended%20FINAL%209.30.20_AAsigned.pdf"face_mask policyIn Illinois, the -4’s seem to correspond to more stringent mask policies implemented in Chicago relative to the rest of the state. So by collapsing negative and positive values of facial_coverings to construct our face_mask variable, we’re probably over stating the extent the extensiveness of policies in effect.

So we should be cautious in how we interpret our collapsed variable, face_mask. Perhaps we could construct another variable that distinguished state-level policies from more localized policies, or we could only look at cases where there was a uniform state policy.

In class, we kind of rushed through our discussion of descriptive statistics. Let’s take a little time to review these concepts in more detail and see how to calculate them in R.

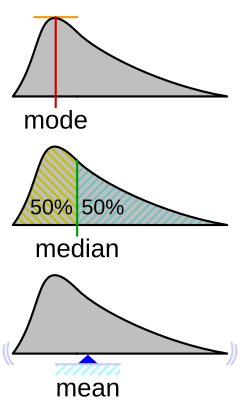

Measures of central tendency describe what a typical value of some variable. In this course, we’ll use three measures of what’s typical:

One of the most frequent measures of central tendency we’ll use in this course is a mean or average.

Suppose we have \(n\) observations of some variable \(x\). We can calculate the mean of \(\bar{x}\) (“x bar), by adding up all the values of x

[ {x}=_{i=1}^n x_i ]

We’ll see later in the course that means are closely related to the concept of expected values in probability and that conditional means (which we’ll calculate below) are central to thinking about linear regression.

For now, please calculate the mean (average) number of new cases per 100,000 residents in our data:

Last class, when we calculated the the average number of new cases under each type of face mask policy, we were calculating a conditional mean the mean of some variable, conditional on some other variable taking a specific value.

Formally, you’ll often see this written in terms of Expected Values: Something like

[ E[Y|X=x] ]

Or to make it more concrete:

[ E[ | ] ]

In code, we could accomplish this manually, using the index operator:

new_cases_pc when face_masks equals “Recommend”By using group_by() with summarise() we can accomplish this more quickly:

The median is another measure of what’s typical for variables that take numeric values

Imagine, we took our data new Covid-19 cases and arranged them in ascending order, from the smallest value to highest value

The median would be the value in the exact middle of that sequence, also known as the 50th percentile.1

Formally, we can define that median as:

[ M_x = X_i : ^{x_i} f_x(X)dx=^f_x(X)dx=1/2 ]

Which might look like Greek to you, which is fine. Just think of it as the middle value.

median() function. How does it compare to the mean?Interesting the median is much lower than the mean. If we were to look at a histogram of our data (more on that next week; think of it as a graphical representation of a frequency table), we see that the new_cases_pc has a “long tail” or is skewed to the right. Most of the values are close to 0, but there are few cases that are extreme outliers.

Medians are less influenced by outliers than means

Conceptually, a mode describes the most frequent outcome.

Modes are useful for describing what’s typical of “nominal” or categorical data like our measure of face mask policy.

To calculate the mode of our face_masks variable, wrap the output of table() with the sort() function

Required all times No policy Recommended

1032 3893 8879

Required shared places Some requirements

15088 24786 For numeric data, modes correspond to the peak of a variable’s density function (more on this later in the class).

You can get a sense of the relationship between, means, median’s and modes from this helpful figure from Wikipedia:

Measures of dispersion describe how much the data “vary.” Let’s discuss the following ways we can summarize how our data vary:

The range of a variable is simply it’s minimum and maximum value

new_cases_pc using the range() functionThe \(p\)-th percentile is the value of the observation such that 100*p percent of the data are to the left and 100-100*p are two the right.

[ p_x = X_i : ^{x_i} f_x(X)dx= p; ^f_x(X)dx=1-p ]

The median is just the 50th percentile

In R we calculate the \(p\)-th percentile using the quantile() setting the probs argument to the \(p/100\) percentile that we we want.

quantile() function to calculate the 25th and 75th percentiles of the new_cases_pc variable.The 25th and 75th percentile define the “Interquartile Range” where 50 percent of the observations lie within this range, and 50 percent lie outside the range.

Variance describes how much observations of a given measure vary around that measure’s mean.

The variance in a given sample is calculated by taking the average of the sum of squared deviations (i.e. differences) around a measure’s mean.

[ ^2_x=_{i=1}n(x_i-{x})2 ]

Use the var() function to calculate the variance of the new_cases_pc variable.

[1] 3570.208[1] 3570.208Variance will be important for thinking about uncertainty and inference (e.g. how might our estimate have been different)

A standard deviation is simply the square root of variable’s variance.

[ _x== ]

Standard deviations are easier to interpet because their units are the same as variable.

Think of them as a measure of the typical amount of variation for variable.

Again, let’s use the sd() function to calculate the standard deviation of the new_cases_pc variable

Measures of association describe how variables relate to each other.

Covariance describes how two variables “co-vary”.

When \(x\) is above its mean, \(y\) also tends to be above it’s mean, these variables have a positive covariance.

If when \(x\) tends to be high, \(y\) tends to be low, these variables have a negative variance

Formally, the sample2 covariance of two variables can written as follows:

\[ cov(x,y)=\frac{1}{n-1}\sum\_{i=1}\^n(x_i-\bar{x})(y_i-\bar{y})\]

percent_vaccinated) and new_cases_pc using the var() functionLike variances, covariances don’t really have intrinsic meaning, since x and y can be measured on very different scales.

The correlation between two variables takes their covariance and scales this by the standard deviation of each variable, creating a measure that can range from -1 (perfect negative correlation) to 1 perfect positive correlation.

Again, we can write this formally

\[ \rho\_{x,y} = \frac{cov(x_y)}{\sigma_x,\sigma_y} \]

But don’t sweat the formulas too much. I’m just contractually obligated to show you math.

percent_vaccinated) and new_cases_pc using the cor() function.You’ll need to set the argument use="complete.obs

Hmm… That seems a little strange. What if we calculated the correlation between vaccination rates and new cases separately for each month in 2021

`summarise()` has grouped output by 'year'. You can override using the

`.groups` argument.# A tibble: 24 × 4

# Groups: year [2]

year month mn_per_vax cor

<dbl> <dbl> <dbl> <dbl>

1 2021 1 0.988 -0.304

2 2021 2 6.01 -0.306

3 2021 3 14.8 0.0107

4 2021 4 28.3 0.0503

5 2021 5 40.9 -0.102

6 2021 6 47.4 -0.206

7 2021 7 50.3 -0.290

8 2021 8 52.4 -0.334

9 2021 9 55.4 -0.288

10 2021 10 57.8 -0.146

# ℹ 14 more rowsLet’s return to the question of the average number of new Covid-19 cases (per 100,000) for different types of face mask policies.

We ended our previous class with this result:

# A tibble: 5 × 2

face_masks new_cases_pc

<fct> <dbl>

1 No policy 10.3

2 Recommended 16.6

3 Some requirements 36.2

4 Required shared places 29.4

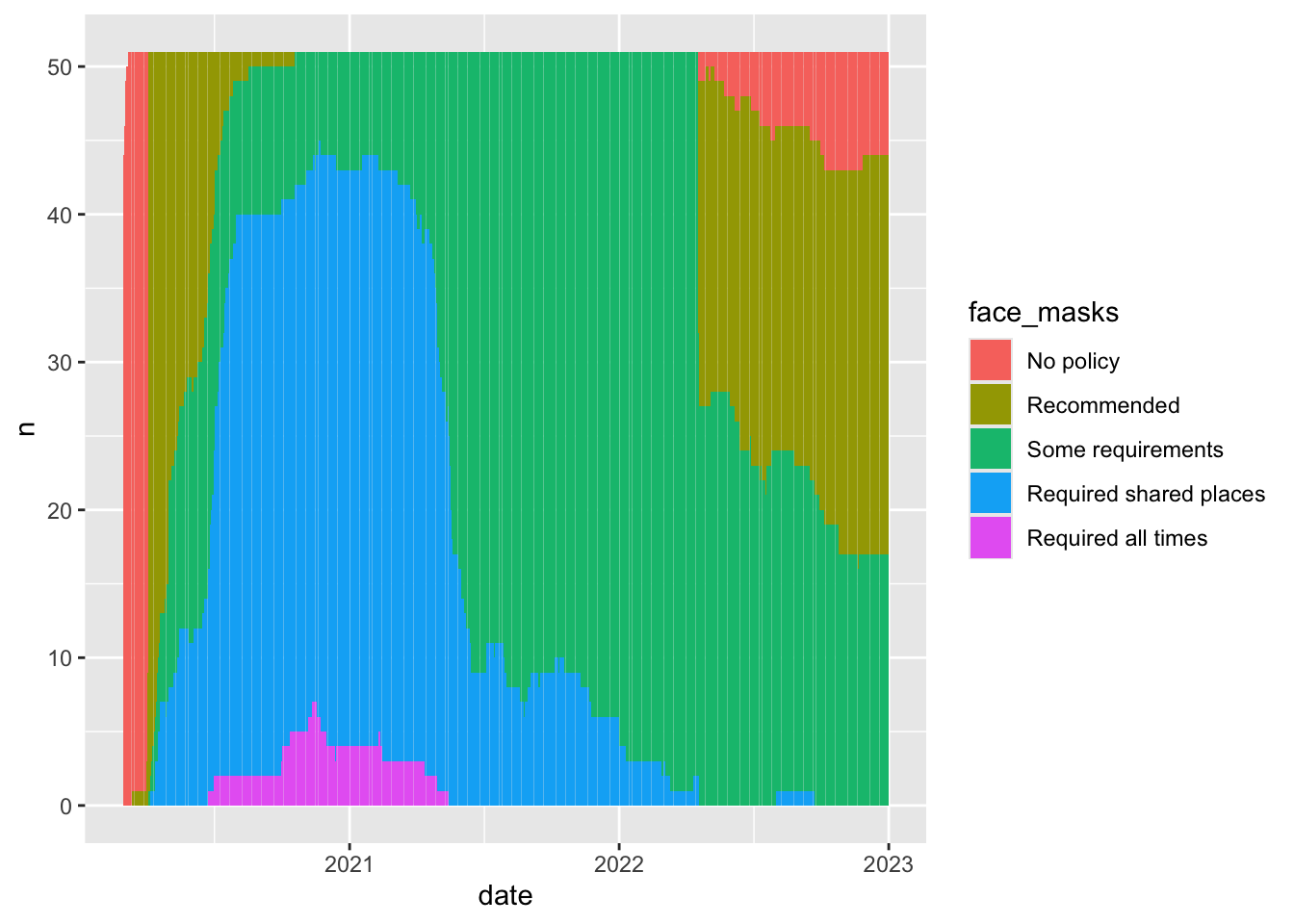

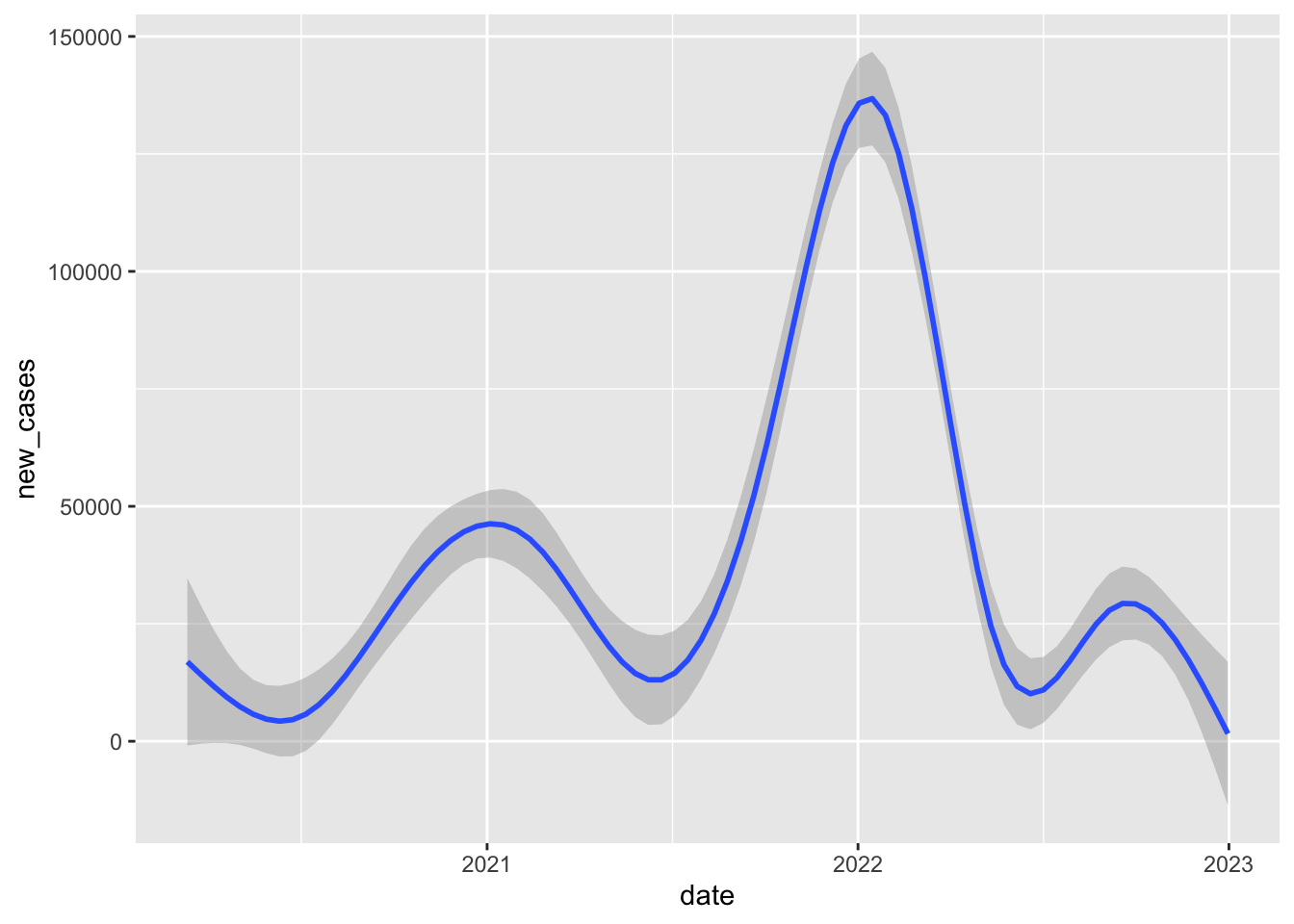

5 Required all times 32.2Probably not that much. Different Face mask policies are implemented at different times in the pandemic. For example, by 2021, almost all states have some requirements. Comparing the average for new cases in states with no policy to states with full requirements, is comparing the state of world in early 2020 to the state of the world in late 2020 to mid 2021. But lots of things differ between these periods. Other policies are also going into effect, new variants are emerging.

In short, those simple conditional means across the full data don’t really provide an apples to apples comparison.

`summarise()` has grouped output by 'date'. You can override using the

`.groups` argument.

`summarise()` has grouped output by 'date'. You can override using the

`.groups` argument.

`geom_smooth()` using method = 'gam' and formula = 'y ~ s(x, bs = "cs")'Warning: Removed 17 rows containing non-finite outside the scale range

(`stat_smooth()`).

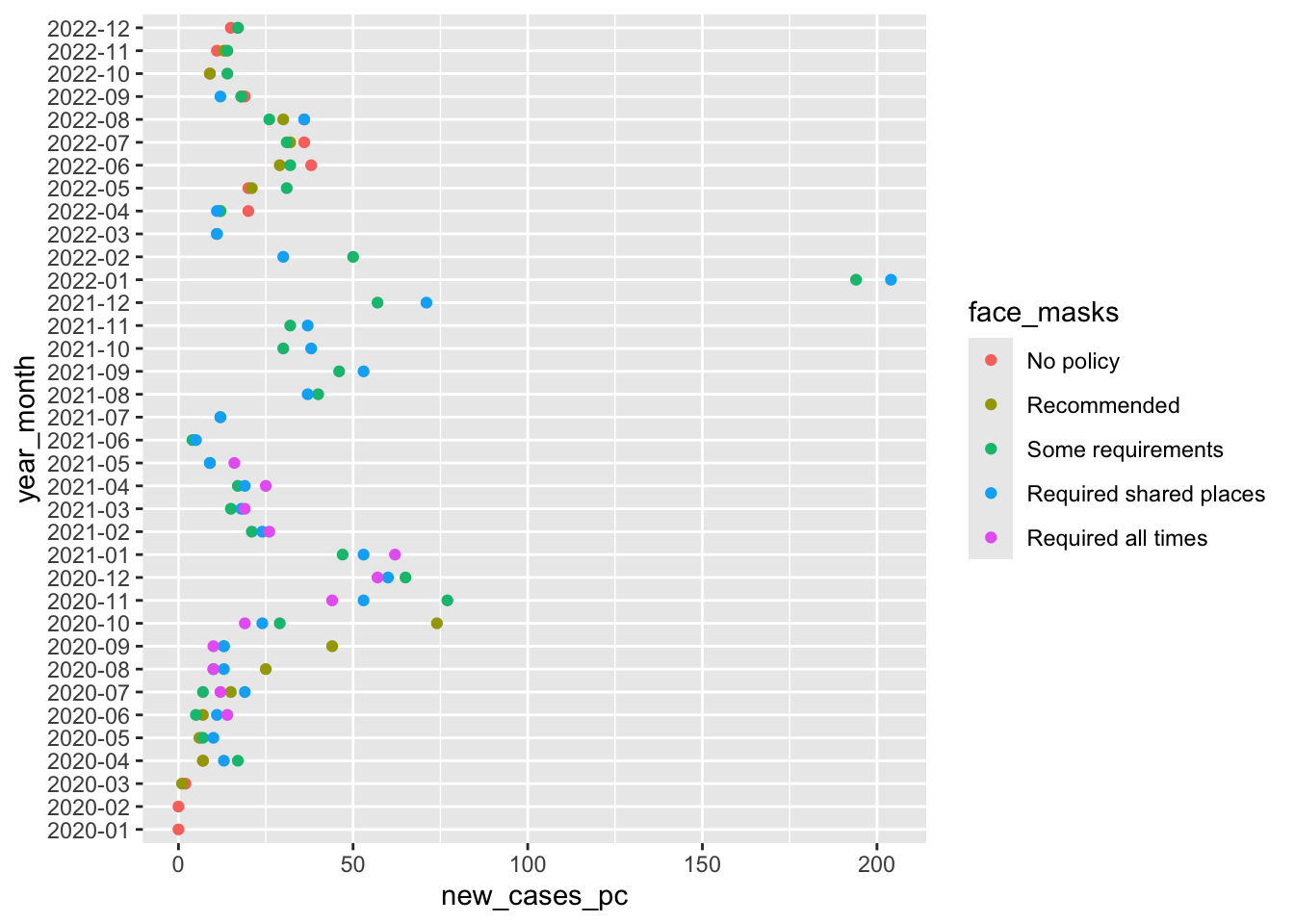

If we limit our comparison to a more narrow time period, say one month in one year, we’re making a fairer comparison between states that are likely facing more similar conditions/challenges.

So when we compare states in September 2020, we see that rates of new cases tend to be much higher in states with only recommend face mask policies compared to states with at least some requirements.

# A tibble: 4 × 2

face_masks new_cases_pc

<fct> <dbl>

1 Recommended 43.9

2 Some requirements 13.5

3 Required shared places 13.0

4 Required all times 10.1cases_by_month_and_policy`summarise()` has grouped output by 'year_month'. You can override using the

`.groups` argument.cases_by_month_and_policy in a searchable tablecases_by_month_and_policyWhat does this figure tell us?

So this figure graphically displays the data cases_by_month_and_policy

From about August 2020 to October 2020 states with facemask requirements saw much lower rates of new cases than states that only recommended face masks.

After October 2020, every state has at least some requirement, and the differences between the stringency of requirements is a little harder to see.

Again this stuff is complicated. Lots of things are changing and these month comparisons are by no means perfect. Lot’s of things differ between states with different mask policies. What we’d really like to know is a sort of counterfactual comparison between the number new cases in a state with a given policy and what those new cases would have been had that state had a different policy.

The problem is, we don’t get to see that counterfactual outcome. So how can we make causal claims about the effects of facemasks, or any other policy that interests us? Finding creative ways to answer these questions is the key to making credible causal claims.

Next week, we’ll explore how to make this figure and many more from our data

Like lecture, we covered a lot in this first lab. Specifically we:

%>%, the pipe command, to chain together multiple functionsfilter() to filter data based on logical comparisonsselect() to select specific variables from our datagroup_by() to apply functions by one or more grouping variablesmutate() to create new columns in our datasummarise() to summarize the output of functions, often by groupsarrange() to sort data by a specific variablemeans, medians and modes to describe typical values of dataranges, percentile ranges, variances and standard deviations to describe how our data varycovariances and correlations to describe relationships between variables in our dataNot all of this will make sense the first time through. That’s OK. The things we’ve done today, we will do again and again over the course of the semester. Overtime, concepts that seemed crazy or confusing, we’ll become second nature.

After class, on the course website and canvas, you’ll find my “commented” solutions today’s lab.

If there are particular parts of the lab where we went too fast, or things didn’t make sense. Take a moment to review these notes. Try re-running the code. Changing the code. Break the code and see if you can fix it.

When you encounter a problem you can solve, send me an email, or ask your friends, or doctor Google. I guarantee you, someone else has had a similar question or problem.

The only dumb question in this course is a question you don’t ask!

Please upload the html file produced by your .Rmd file to Canvas

Finally, please take a moment to complete this weeks class survey

It’s a little more complicated as we need to decide how to handle situations where their are ties, or an even number of cases. For now we’ll just accept the default rules R uses.↩︎

Astute readers might ask, why are you talking about samples? We’ll come back to this later in the course when we talk about probability, estimation and statistical inference.↩︎